Search engines play a pivotal role in our daily lives, connecting us to the vast sphere of information on the internet. Ever wondered how these search engines deliver precise and relevant results within a fraction of a second? The secret lies in their complex and ever-evolving search engine algorithms.

Each year, Google rolls out numerous updates, often with minimal warning, that alter the landscape for marketers in both substantial and subtle ways. Even seasoned professionals with years of experience in paid search find the intricacies of the search engine algorithm hard to decipher. Grasping the fundamentals lets you adapt quickly to new changes and prepare your campaigns ahead of time, so that updates don’t disrupt your strategic plans.

In this post, we will explore the world of search engine algorithms and unravel the mechanisms that power our online searches.

The Basics of Search Engine Algorithms

Fundamentally, a search engine algorithm is a set of rules and processes designed to determine the order in which results are displayed in response to a user’s query. The exact algorithms are proprietary secrets closely guarded by companies like Google, Bing, and others, but their general mechanics are broadly understood.

Let’s consider a simplified example to illustrate how search engine algorithms work:

Imagine you’re a librarian (search engine) in charge of organizing a vast library (the internet) with numerous books (web pages). Your goal is to help patrons (users) find the most relevant books based on their queries.

In this analogy, the librarian dispatches diligent assistants, known as crawlers or spiders, to traverse the library shelves. These crawlers examine each book (a web page), reading its title and content. Their mission is to collect the information needed to build a comprehensive catalog.

Armed with the data collected during crawling, the librarian proceeds to create an organized catalog, also known as an index. Every book is categorized based on topics, keywords, and perceived relevance. This systematic classification allows for quick retrieval of information when a user initiates a search.

Now, imagine a patron walks in with a query: “Books on modern technology.” The librarian consults the index and weighs several factors: how often the term “modern technology” appears in each book (keyword relevance), the reputation of its author (page authority), and its overall popularity (user engagement). The librarian then presents a list of books ranked to match the patron’s likely preferences.

Beyond the technicalities, the librarian also takes into account the user experience. The readability and clarity of the books are considered, ensuring that patrons find valuable information in an accessible manner. A positive user experience fosters loyalty, as patrons are more inclined to return to a library that consistently meets their information needs.

As this example suggests, search engines generally share three common elements:

- Crawling: Search engines deploy bots, commonly known as crawlers or spiders, to scour the web and discover content. These bots follow links from page to page, collecting data on web pages and storing the relevant information in vast databases.

- Indexing: The collected data is organized into an index, a structured database that allows the search engine to quickly retrieve information when a user enters a query. Indexing involves categorizing content based on keywords, relevance, and other factors.

- Ranking: The heart of search engine algorithms lies in their ability to rank results. Algorithms evaluate numerous factors to determine the order in which pages appear in search results. These factors include relevance, keyword usage, page quality, user experience, and more.
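
The three stages above can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not how any real search engine is implemented: the `PAGES` dictionary is a made-up, in-memory stand-in for the web, and the ranking step simply counts how often the query words appear, whereas real engines combine many more signals.

```python
from collections import Counter, defaultdict, deque
import re

# Hypothetical in-memory "web": page name -> (page text, outgoing links).
PAGES = {
    "home": ("Welcome to our guide on modern technology", ["ai", "history"]),
    "ai": ("Modern technology trends: AI and modern technology tools", ["home"]),
    "history": ("A short history of printing", ["ai", "gardening"]),
    "gardening": ("Gardening tips for beginners", []),
}

def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())

def crawl(start):
    """Crawling: follow links breadth-first, starting from a seed page."""
    seen, queue = {start}, deque([start])
    while queue:
        url = queue.popleft()
        text, links = PAGES[url]
        yield url, text
        for link in links:
            if link in PAGES and link not in seen:
                seen.add(link)
                queue.append(link)

def build_index(crawled):
    """Indexing: map each word to the pages that contain it, with counts."""
    index = defaultdict(Counter)
    for url, text in crawled:
        for word in tokenize(text):
            index[word][url] += 1
    return index

def search(index, query):
    """Ranking: order pages by how often the query words appear in them."""
    scores = Counter()
    for word in tokenize(query):
        for url, count in index.get(word, {}).items():
            scores[url] += count
    return [url for url, _ in scores.most_common()]

index = build_index(crawl("home"))
results = search(index, "modern technology")
print(results)  # ['ai', 'home']
```

The "ai" page ranks first because it mentions both query words twice, while "home" mentions each once. Pages that never mention the query, such as "gardening", do not appear at all.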

How do search engine algorithms operate?

Search engines prioritize enhancing the user experience, and Google’s ascendancy as the leading search engine is attributed to its intricate algorithms. These search engine algorithms employ sophisticated tactics to streamline the search process, ensuring users receive the information they seek.

To do so, they draw on a wide range of signals, from obvious indicators such as content quality to the website owner’s history of spam.

Google is widely reported to use more than 200 ranking factors to determine which results appear and in what order. Even if you have adapted well to earlier changes, each new update can undo your efforts. While these updates primarily target organic search, their repercussions can extend to paid search, with less evident but still impactful consequences.

A case in point is the possibility of your ads ceasing to appear for a significant portion of your target audience’s queries due to insufficient specificity on the corresponding landing page.

Key Factors Influencing Search Rankings

a. Relevance: Search engines aim to provide users with the most relevant results. Search engine algorithms scrutinize the content of web pages to ensure that it matches the user’s query.

b. Keywords: The strategic use of keywords plays a crucial role. Algorithms consider the presence, frequency, and placement of keywords on a page to assess its relevance.

c. Backlinks: The number and quality of links pointing to a webpage influence its authority and, consequently, its ranking. High-quality backlinks from reputable sources are particularly valuable.

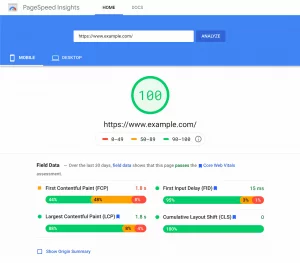

d. User Experience: Search engines prioritize pages that offer a positive user experience. Determinants such as page load speed, mobile-friendliness, and overall usability contribute to a site’s ranking.

e. Content Quality: Engaging, informative, and well-structured content is favored by search engine algorithms. Fresh and regularly updated content also signals relevance.
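
To make the backlink factor more concrete, here is a minimal sketch of PageRank, the classic link-analysis algorithm published by Google’s founders. It is offered only as an illustration of how the "number and quality of links" idea can be turned into a score; it is not a description of how Google ranks pages today. The `LINKS` graph and page names are invented for the example, and the sketch assumes every linked page also appears as a key in the graph.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank computed by power iteration.

    `links` maps each page to the list of pages it links to.
    Every link target is assumed to be a key of `links` as well.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page receives a small baseline share of rank.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outgoing in links.items():
            if outgoing:
                # A page splits its rank evenly among the pages it links to.
                share = damping * rank[page] / len(outgoing)
                for target in outgoing:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank evenly.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank

# Hypothetical link graph: three pages link to "guide".
LINKS = {
    "guide": ["blog"],
    "blog": ["guide"],
    "news": ["guide"],
    "forum": ["guide", "blog"],
}

ranks = pagerank(LINKS)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this graph, "guide" ends up with the highest score because it receives the most links. "blog" scores nearly as high with only two inbound links, because one of them comes from the high-ranking "guide" page, which is the sense in which link quality matters as well as link count.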

Search Engine Algorithm Updates

Not all updates carry the same weight. Monitoring every Google update while staying focused on your marketing strategy is a daunting task. Here are the main types of search engine algorithm updates, followed by two notable examples:

1. Major Updates

These updates are infrequent and aimed at specific search algorithm issues. The Core Web Vitals update, for instance, addresses user experience problems on web pages. Updates of this kind are typically released once or twice a year.

2. Broad-Core Updates

These updates target low-quality pages and adjust the importance of various ranking factors. For example, a shift may prioritize page loading speed over the total number of backlinks. Broad-core updates typically occur every four to five months.

3. Small Updates

Generally, these updates bring subtle improvements to the searcher’s experience without major visible changes in your site’s performance and analytics. They are implemented more frequently, sometimes on a daily or weekly basis.

4. BERT Update

In 2019, Google introduced a significant search engine algorithm update known as BERT, designed to enhance the search engine’s interpretation of natural, conversational language queries and improve contextual understanding.

BERT pushed marketers to focus on user intent more than ever. Before BERT, the emphasis was on individual keywords; after the update, complete phrases and their context gained importance.

As Google continually evolves its algorithms with each update, the overarching goal is to enhance user utility. Unfortunately, for digital marketers, predicting specific changes remains a formidable challenge.

Yet, by grasping the broader intent of improving the searcher’s experience, it becomes feasible to adjust your Search Engine Marketing (SEM) strategy, ensuring it remains resilient amid the impact of new updates.

5. MUM Update

In May 2021, Google unveiled the Multitask Unified Model, commonly known as MUM. Presented by Google as a far more powerful successor to BERT, MUM uses AI to analyze content in a manner closer to human comprehension.

MUM’s primary objective is to handle complex search queries that cannot be answered with a short snippet. According to Google, people issue an average of eight searches to complete such tasks. MUM aims to anticipate these follow-up searches and deliver comprehensive answers directly on the first search engine results page (SERP).

For optimal adaptation of your Search Engine Marketing (SEM) strategy to MUM, consider the following focal points:

- High-Quality Internal Linking: Establishing a robust internal linking system enhances the connectivity of your content, aiding MUM in delivering more accurate results.

- Leveraging Structured Data: Employ structured data to provide MUM with clear and organized information, facilitating its understanding of your content.

- Predicting Complex Queries: Anticipate complex queries within the buyer’s journey, enabling your content to preemptively address user needs and align with MUM’s capabilities.

- Multi-Tiered Content: Create content in multi-tiered structures, breaking it into snippet-friendly fragments that align seamlessly with MUM’s processing.
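
As an illustration of the structured data point above, the sketch below builds schema.org `Article` markup in JSON-LD, a format search engines commonly read. The helper name `article_json_ld` and all field values (headline, author, date) are placeholders invented for this example; which properties matter for your pages depends on your content type, so treat this as a starting point rather than a complete recipe.

```python
import json

def article_json_ld(headline, author, date_published, description):
    """Build schema.org Article markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "description": description,
    }
    return json.dumps(data, indent=2)

markup = article_json_ld(
    headline="How Search Engine Algorithms Work",
    author="Jane Doe",            # placeholder author
    date_published="2024-01-15",  # placeholder date (ISO 8601)
    description="An overview of crawling, indexing, and ranking.",
)

# The JSON-LD is embedded in the page inside a script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The resulting script tag is typically placed in the HTML of the page it describes, so that the markup and the visible content stay in sync.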

In essence, major and broad-core updates merit attention because they can significantly affect rankings. Even so, only a select few are strong enough to noticeably change your overall ranking performance.

Mitigating the Negative Impact of Search Engine Algorithm Updates

- Stay Updated: Keep abreast of industry news and updates. Regularly check official announcements from search engines to understand upcoming changes.

- Diversify Strategies: Avoid overreliance on specific tactics. Diversify your SEO strategies, incorporating various elements like content quality, user experience, and technical optimizations.

- Prioritize User Experience: Emphasize a positive user experience. Verify that your website is mobile-friendly, loads quickly, and provides valuable, relevant content.

- Follow Best Practices: Adhere to search engine guidelines and best practices. Avoid black-hat SEO techniques, as they may result in penalties when algorithms update.

- Regular Audits: Conduct regular site audits with best-in-class tools to identify and rectify potential issues. Monitor backlinks, content quality, and technical aspects to ensure compliance with search engine standards.

- Adaptability is Key: Be adaptable and ready to adjust strategies. Algorithm updates may require shifts in focus, such as optimizing for new ranking factors introduced by the update.

- Monitor Analytics: Keep a close watch on website analytics. Detect any sudden drops in traffic or rankings and investigate promptly to identify and address issues.

- Content Quality: Prioritize high-quality content. Algorithms often favor valuable, informative content. Routinely update and refresh your content to maintain relevance.

- Structured Data Usage: Leverage structured data to provide search engines with clear information about your content. This helps them understand your pages and can protect your visibility during updates.

- Engage in Ethical SEO: Focus on ethical SEO practices that align with search engine guidelines. Building a trustworthy online presence can help withstand algorithmic changes.
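
The "Monitor Analytics" step can be partly automated. The sketch below flags days on which visits fall sharply below the recent average. The function name `find_traffic_drops`, the seven-day window, the 30% threshold, and the sample numbers are all assumptions chosen for illustration; in practice you would feed in daily figures exported from your analytics tool and tune the threshold to your site’s normal fluctuations.

```python
from statistics import mean

def find_traffic_drops(daily_visits, window=7, threshold=0.30):
    """Return indexes of days whose visits fall well below the recent average.

    A day is flagged when its visits are more than `threshold` (30% by
    default) below the mean of the preceding `window` days.
    """
    drops = []
    for day in range(window, len(daily_visits)):
        baseline = mean(daily_visits[day - window:day])
        if baseline > 0 and daily_visits[day] < baseline * (1 - threshold):
            drops.append(day)
    return drops

# Made-up daily visit counts with a sudden dip on days 8 and 9.
visits = [1000, 1020, 980, 1010, 990, 1005, 995, 1000, 640, 650, 1015]
drops = find_traffic_drops(visits)
print(drops)  # [8, 9]
```

A flagged day is only a prompt to investigate: comparing the date against announced algorithm updates, site changes, or seasonal patterns helps identify the actual cause.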

By adopting a proactive and diversified approach to SEO, you can minimize the potential negative impacts of algorithm updates and position your website for long-term success in the ever-evolving digital landscape.

Conclusion

Search engine algorithms are the backbone of the internet, shaping the way we discover and consume information. Understanding the fundamental principles behind these algorithms empowers businesses and content creators to fine-tune their online presence. As search engines continue to refine their algorithms, staying informed about the latest developments is key to navigating the dynamic landscape of online visibility.

FAQs

Why is understanding search engine algorithms important for marketers?

Understanding search engine algorithms is vital for marketers as it allows them to optimize their online presence. By aligning strategies with ranking factors, adapting to updates, and focusing on user experience, marketers can enhance visibility, relevance, and overall success in the competitive digital landscape.

What steps can I take to adjust my SEM strategy for algorithm updates?

For algorithm updates like MUM, focus on high-quality internal linking, leverage structured data, predict complex queries in the buyer’s journey, and create multi-tiered content. Prioritizing user experience helps navigate these changes effectively.

Can algorithm updates impact paid search campaigns?

Yes, algorithm updates can impact paid search campaigns. Changes in ranking factors or user behavior may affect the visibility and relevance of paid ads. Adapting to the evolving search landscape is essential for maintaining campaign effectiveness.